The process of getting your research published is not for the faint of heart. Before you, or your favorite LLM, can access a peer-reviewed research paper online, the researchers behind that paper typically spend months or even years of their lives (1) developing the core research idea, (2) evaluating it against existing state-of-the-art solutions, and (3) preparing a research paper, including all figures, tables, plots, witty titles, etc., for submission to a peer-reviewed publication venue such as a conference, journal, or magazine. After submitting to one of these venues, researchers must then wait weeks to months to receive the verdict of the peer-review process. If the submitted paper is accepted, the researchers breathe a sigh of relief and begin finalizing the “camera-ready” version (the version that is published). However, if the submitted paper isn’t accepted, the researchers, after going through the five stages of grief, work to improve it based on feedback from the reviewers and resubmit the updated version at a later date. Regardless of the number of submissions, an accepted paper is cause for celebration because all of our hard work has paid off, and our research now has the potential to reach others in our field. As researchers, we can only hope that, once the paper is published and presented more broadly, others in our community see the value in our work just as the reviewers did. In this post, we get to celebrate just that.

Every year, researchers in the hardware and embedded security community organize an in-person workshop event known as the Top Picks in Hardware and Embedded Security. The goal of the Top Picks workshop is to recognize “the best of the best in hardware security, spanning the gamut from hardware to microarchitecture to embedded systems.” Hardware security papers published within the preceding six years at one of the leading venues in our field are eligible to be selected as a “Top Pick”. To be considered, authors of an eligible paper must submit a 2-page self-nomination letter that (1) summarizes the paper’s key contributions and (2) argues “for the potential of the work to have a long-term impact, clearly articulating why and how it will influence other researchers and/or industry.” After reviewing the self-nomination letters, the Top Picks organizers select a subset of the submitted papers to be “shortlisted papers”, meaning those papers are candidates for being a Top Pick. During the in-person workshop event, authors of shortlisted papers present their work and make the case for its long-term impact. Following the in-person presentations, the Top Picks organizers select a subset of the shortlisted papers as “Top Picks” for that year.

In September 2025, we submitted two of our multi-institution hardware security papers for consideration: (1) “Pentimento: Data Remanence in Cloud FPGAs” (published 2024) and (2) “Security Verification of the OpenTitan Hardware Root of Trust” (published 2023). In mid-October 2025, we were fortunate enough to have both papers shortlisted for the Top Pick award. However, with the in-person Top Picks workshop (co-located with ICCAD 2025 in Munich) happening on October 30th, we had to quickly decide whether we would even be able to participate since (1) none of us (the authors of both papers) were planning on attending ICCAD, (2) we are all based on the West Coast of the US, (3) we would have to organize and book international travel within a week of departure, and (4) we still had to prepare our presentations. Luckily, roadblocks 1-3 were taken care of when Andy Meza volunteered to go on this “last-minute research mission”. Since he is an author on both papers, he met the presentation requirements for our shortlisted papers. Roadblock 4 (preparing the presentations) was handled through classic teamwork from the “Pentimento” authors (led by Colin Drewes) and the “Security Verification of OpenTitan” authors (led by Andy Meza). Following the in-person presentations, we received news that “Security Verification of the OpenTitan Hardware Root of Trust” had been selected as a Top Pick.

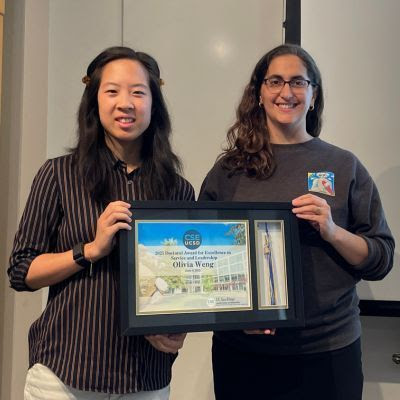

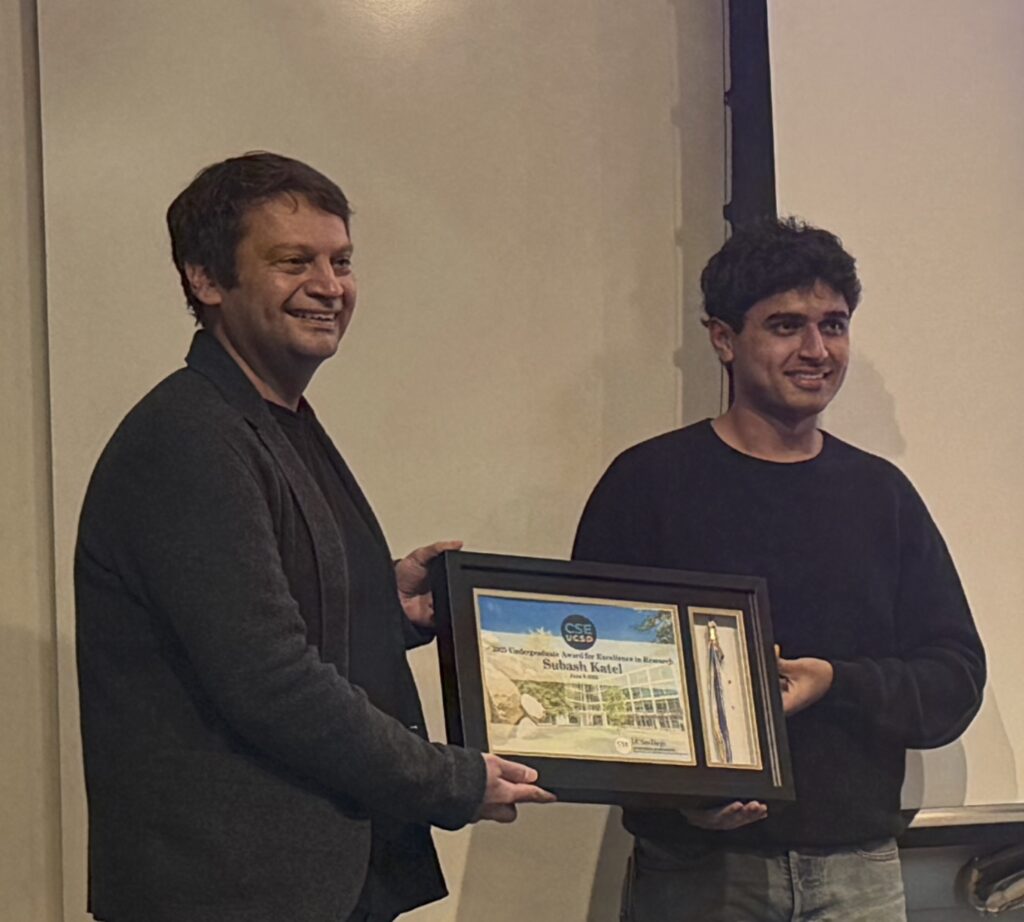

Being shortlisted for or selected as a Top Pick is a major honor, as it demonstrates the quality, relevance, and impact of the research we do. With that in mind, we congratulate Colin Drewes, Olivia Weng, Andy Meza, Alric Althoff, David Kohlbrenner, Ryan Kastner, and Dustin Richmond for being shortlisted for a 2025 Top Pick for “Pentimento: Data Remanence in Cloud FPGAs”, and Andy Meza, Francesco Restuccia, Jason Oberg, Dominique Rizzo, and Ryan Kastner for being shortlisted and selected for a 2025 Top Pick for “Security Verification of the OpenTitan Hardware Root of Trust”.

To learn more about these research projects:

- Pentimento: Previous Post, Technical Paper

- OpenTitan Security Verification: Previous Post, Technical Paper

Referenced Papers:

Colin Drewes, Olivia Weng, Andres Meza, Alric Althoff, David Kohlbrenner, Ryan Kastner, and Dustin Richmond, “Pentimento: Data Remanence in Cloud FPGAs”, ACM International Conference on Architectural Support for Programming Languages and Operating Systems (ASPLOS), April 2024

Andres Meza, Francesco Restuccia, Jason Oberg, Dominique Rizzo, and Ryan Kastner, “Security Verification of the OpenTitan Hardware Root of Trust”, IEEE Security & Privacy, Volume 21, Issue 3, May-June 2023

Post written by Andres Meza